Ariel Virtual Computer

June 10, 2021 · Ariel

When I was a teenager, I only had access to a Commodore 64 at home. I envied my friends who had a PC or even an Amiga for the seemingly unlimited RAM and the crisp high-resolution text display. Glorious 80 columns! At one point I wrote a Bash-like shell on my C64 with an 80-column software display, where each character had to fit into 4*8 pixels. It looked surprisingly good on my monochrome monitor, all things considered, but it was slow, and the shell itself used up so much space that I barely had any RAM left for loading programs.

Later I got an Amiga 500 and I was happy with it text-wise, since it could display a proper 640*200 screen with 80 columns of text. It was great for logging into a remote server via telnet using a modem (back then it wasn't considered harmful), where I had an account and could use email and IRC. Writing assembly and C code on the Amiga was great, and I had 1 MB of RAM in it, which felt like tons. For years I nurtured a dream that I would get an Amiga 1200 with a hard disk and write a whole OS for it in assembly. That dream was never realized. When I got a proper PC, I wrote programs in C. Making an OS didn't seem plausible due to the complexity of the CPU and the hardware itself. Sure, I wrote some assembly code with BIOS interrupt calls, but making anything serious as bare metal code is pretty hard on a PC, especially these days.

A few years ago I thought the Raspberry Pi would be great for such aspirations, but while the ARM CPU itself is OK to code for, the hardware is just way too modern and, at the same time, surprisingly badly documented. Sure, making the Status LED blink was doable, but even that ship has sailed with the Pi 4, as I heard. Putting text on the screen is hard because you have to work in graphics mode (good luck even setting up a frame buffer with all those badly documented mailboxes), accessing the SD card is hard, writing a whole FAT32 file system driver is really hard, and writing a USB driver for keyboard handling is super hard. Using the GPIO pins to access the Pi via a serial port is doable, but then you are still just using a terminal program to access a box, and for every change in the kernel code you need to copy data to the SD card and reboot, or use QEMU for everything, and... Small wonder that most "Let's make an OS for an ARM SBC" projects die at the stage of a blinking LED.

Some dreams come true

My dream computer would use a 32-bit CPU that is similar to ARM but less complicated to work with, so it's somewhere between the 6502 and the ARMv6. Lots of RAM, up to 4 GB due to the 32-bit address space, though realistically I would never need that much. Easy access to the hardware through Hardware I/O registers. Unified memory architecture, in case I ever add video and audio to it. A smart disk controller device that gives access to the file system in a high-level way, with its firmware handling the complicated block-level tasks. A serial console port to access the system using a terminal program.

Since nobody is going to make it for me, and I'm just a software guy, I decided to make my own computer as a virtualized entity. It's called Ariel.

I created a small Virtual Machine in C# that can run a RISC CPU I designed and some virtual hardware devices I made. The ARM-like CPU is something that would work in real life, since it could be implemented as an FPGA core, and the devices are either realistic or plausible. The VM can run code at over 12 MIPS (million instructions per second) on my PC, which is comparable to a speedy Intel 486. That's plenty of power for my needs (I would be fine with just 1 MIPS), and the VM code is not even particularly optimized. Also, this is just a number; I believe the Ariel CPU packs a bigger punch than a 486 when it needs to run useful code.
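To give a feel for what "a VM running a CPU" and a MIPS figure mean in practice, here is a minimal sketch of a fetch-decode-execute loop in Python. The instruction set is a hypothetical three-register toy made up purely for illustration; it is not the Ariel CPU's instruction set, and the real VM is written in C#. The names `run`, `countdown_program` and `mips` are likewise assumptions of this sketch.

```python
import time

# Hypothetical toy opcodes for illustration only (NOT the Ariel instruction set).
LOADI, ADD, SUBI, JNZ, HALT = range(5)


def run(program):
    """Interpret a list of (opcode, a, b) tuples.

    Returns the final register values and the number of instructions executed.
    """
    regs = [0, 0, 0]
    pc = 0
    executed = 0
    while True:
        op, a, b = program[pc]  # fetch
        pc += 1
        executed += 1
        if op == LOADI:         # regs[a] = immediate b
            regs[a] = b
        elif op == ADD:         # regs[a] += regs[b], wrapped to 32 bits
            regs[a] = (regs[a] + regs[b]) & 0xFFFFFFFF
        elif op == SUBI:        # regs[a] -= immediate b, wrapped to 32 bits
            regs[a] = (regs[a] - b) & 0xFFFFFFFF
        elif op == JNZ:         # jump to instruction index b if regs[a] != 0
            if regs[a] != 0:
                pc = b
        elif op == HALT:
            return regs, executed
        else:
            raise ValueError(f"unknown opcode {op}")


def countdown_program(n):
    """Build a program that sums n + (n-1) + ... + 1 into register 0."""
    return [
        (LOADI, 0, 0),  # r0 = 0 (accumulator)
        (LOADI, 1, n),  # r1 = n (counter)
        (ADD, 0, 1),    # r0 += r1
        (SUBI, 1, 1),   # r1 -= 1
        (JNZ, 1, 2),    # loop back to the ADD while r1 != 0
        (HALT, 0, 0),
    ]


def mips(instructions, seconds):
    """Million instructions per second for a measured run."""
    return instructions / seconds / 1_000_000


if __name__ == "__main__":
    start = time.perf_counter()
    regs, executed = run(countdown_program(100_000))
    elapsed = time.perf_counter() - start
    print(f"sum = {regs[0]}, {executed} instructions, {mips(executed, elapsed):.2f} MIPS")
```

The MIPS value is simply the executed instruction count divided by the elapsed wall-clock time, so it depends heavily on the host machine and on how the interpreter loop is written. An interpreted Python loop like this one will land far below the figure quoted above for the C# VM.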

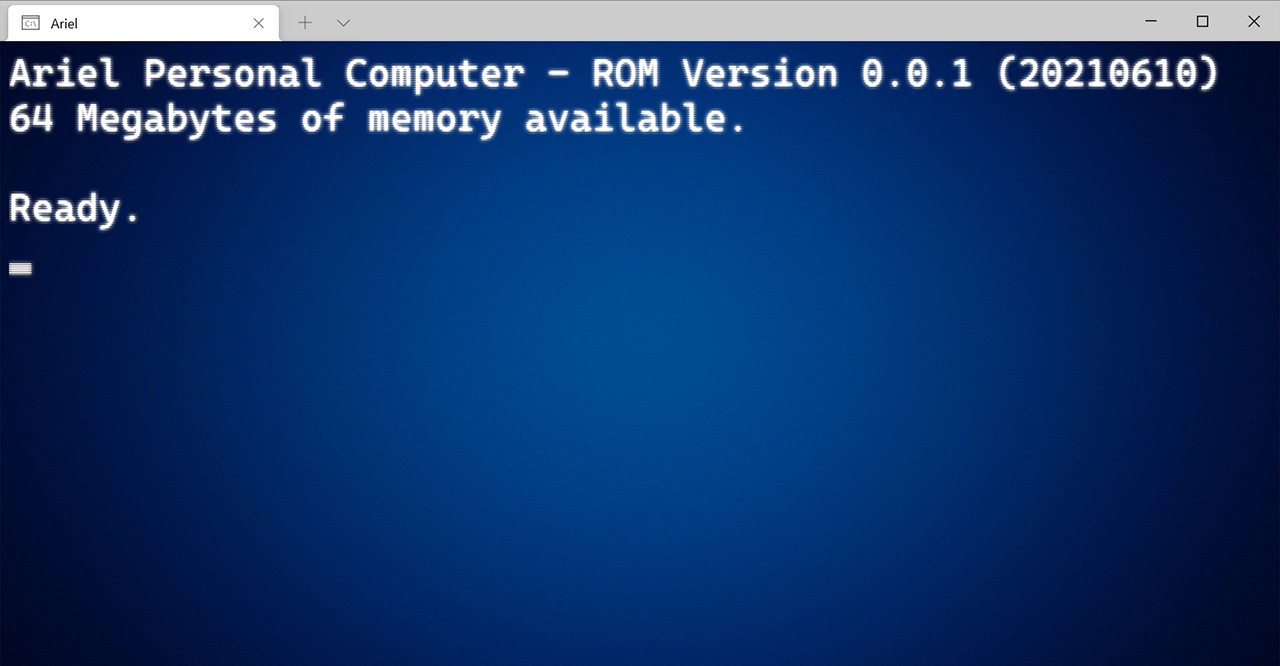

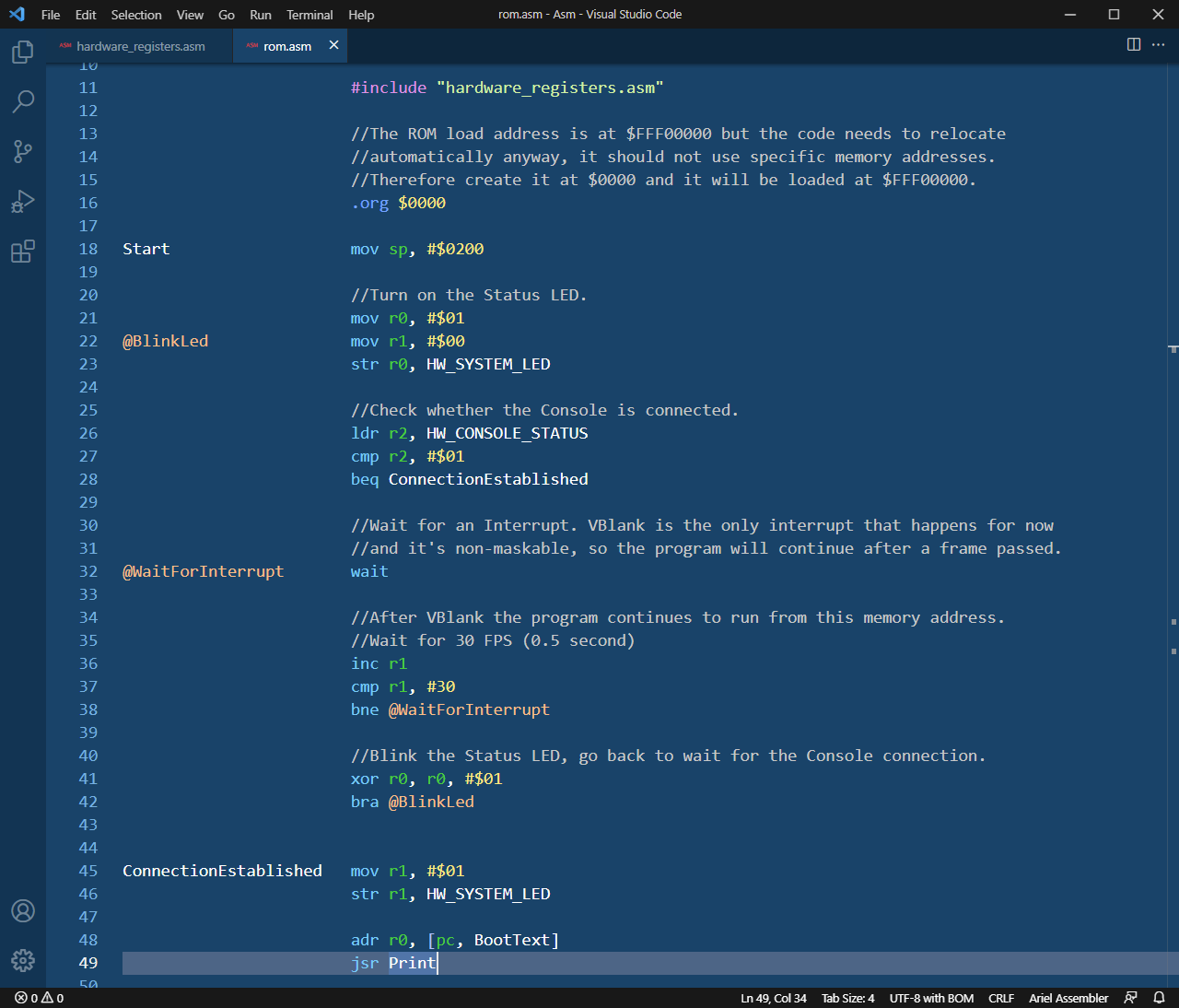

I wrote my own assembler for it, along with a Visual Studio Code extension. It works like a charm, and in some aspects it's even better than Retro Assembler. Ariel boots a ROM image that is really simple so far, and afterwards the ROM will load the Kernel from the file system. The RAM size is configurable, up to 4095 MB. The top 1 MB of the 4 GB address space is the Hardware Space, and the top 64 KB of that is the Hardware I/O Space for the hardware registers that give me access to devices. The VM emulates the serial console port using a TCP socket, so I can connect to it via Telnet on a specific port, even over the Internet.
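The memory layout described above boils down to a bit of address arithmetic, sketched here in Python. The sizes come from the text. The assumptions of this sketch are that RAM starts at address 0 and that the Hardware Space sits at the very top of the 32-bit address space; the region names and the `classify` helper are hypothetical as well.

```python
MB = 1 << 20
KB = 1 << 10
ADDRESS_SPACE = 1 << 32  # 32-bit addresses cover 4 GB

# Sizes taken from the text.
HARDWARE_SPACE_SIZE = 1 * MB
HARDWARE_IO_SIZE = 64 * KB

# Assumption: both regions are anchored at the top of the address space.
HARDWARE_SPACE_BASE = ADDRESS_SPACE - HARDWARE_SPACE_SIZE  # 0xFFF00000
HARDWARE_IO_BASE = ADDRESS_SPACE - HARDWARE_IO_SIZE        # 0xFFFF0000

# Everything below the Hardware Space is available for RAM.
MAX_RAM_MB = HARDWARE_SPACE_BASE // MB                     # 4095


def classify(address, ram_size_mb):
    """Name the region a 32-bit address falls into (hypothetical helper).

    Assumes RAM starts at address 0 and is ram_size_mb megabytes long.
    """
    if not 0 <= address < ADDRESS_SPACE:
        raise ValueError("address does not fit in 32 bits")
    if not 0 < ram_size_mb <= MAX_RAM_MB:
        raise ValueError("RAM size must be between 1 and 4095 MB")
    if address >= HARDWARE_IO_BASE:
        return "hardware_io"
    if address >= HARDWARE_SPACE_BASE:
        return "hardware_space"
    if address < ram_size_mb * MB:
        return "ram"
    return "unmapped"


if __name__ == "__main__":
    print(f"Hardware Space starts at 0x{HARDWARE_SPACE_BASE:08X}")
    print(f"Hardware I/O Space starts at 0x{HARDWARE_IO_BASE:08X}")
    print(f"Maximum RAM: {MAX_RAM_MB} MB")
    print(classify(0xFFFF0004, ram_size_mb=16))
```

This also shows where the 4095 MB limit comes from: the 4096 MB a 32-bit address can reach, minus the 1 MB reserved for the Hardware Space.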

What is it good for?

- Primarily it's for me. Making my own CPU and hardware devices, albeit virtually, is a great challenge and fun for an engineer. I enjoy it immensely and I haven't played many video games since I started this project.

- I made an assembler for it, which was and still is a really enjoyable pastime.

- I can write my own ROM for it. The current one already starts the computer and allows for terminal communication.

- I can write my own OS for it, finally.

- I can write interpreters or compilers for various languages like Basic and Python, and I can even write a small C compiler, because compilers are fun to make. Making them generate x86 or ARM code is hard and realistically nobody else would use them, so I might as well just generate code for my own CPU.

- I can write a C# -> IL -> Ariel Assembly compiler so I can write complex applications for it in Visual Studio, if I choose to do so.

- Later I can add a video device to it and even audio.

- Others who would like to dabble in writing bare metal code in assembly, ROM code or even a kernel could use it and enjoy working on it, without the endless frustration of using real modern hardware.

- I plan to put it on GitHub when it's a bit more mature, so others could contribute, or even modify it for their own needs if they want to.

- Somebody could just make a ROM that starts up a Basic interpreter, like in the Commodore 64. I might be that somebody, some day.

If you're interested in this project and would like to try it later, or perhaps even write some code for Ariel, let me know what you think on Twitter. I would appreciate it. I'll follow up on how the project is going when there's more to share.